Best AI Models in 2026: The Complete Guide by Use Case

The AI landscape has never moved faster. What was considered a frontier AI model six months ago is already being outpaced by newer releases, and new specialized models now exist for almost every creative and professional task you can think of. Whether you are writing code, generating images, producing marketing videos, or simply trying to get better answers to complex questions, there is an AI model built specifically for what you need.

The challenge is that the best AI models are scattered across different platforms, each requiring its own account, subscription, and learning curve. This guide cuts through the noise. We cover the best AI models available in 2026 by use case, explain what makes each one stand out, and show you which platform brings the most powerful ones together so you can stop juggling tools and start creating.

- What are AI models and why does choosing the right one matter?

- The best AI models in 2026 by use case

- MyEdit: The best platform combining top AI models for media creation

- AI models comparison table

- Frequently Asked Questions

What are AI models and why does choosing the right one matter?

An AI model is a trained system that takes input, whether that is text, images, audio, or video, and produces an intelligent output. Large Language Models (LLMs) like Claude and GPT-5 generate and understand text. Image generation models like Nano Banana turn written prompts into visuals. Video AI models like Kling and Veo 3.1 animate scenes from a single description or reference image.

Not all AI models are created equal, and more importantly, no single model is the best at everything. In 2026, the AI field has entered an era of specialization. Benchmark leaders in reasoning often fall short in creative writing. The top image generator may produce stunning visuals but struggle with accurate text rendering. The model best suited for coding agents may be completely wrong for producing a product video ad.

Choosing the right AI model for your task directly affects the quality of your output, the time you spend iterating, and the cost of your workflow. The sections below break down which models lead in each major category and why.

The best AI models in 2026 by use case

- Claude (Anthropic) - Best AI model for writing and agentic tasks

- GPT-5 (OpenAI) - Best all-around AI model

- Gemini 3 Pro (Google) - Best AI model for reasoning and data

- Nano Banana Pro (Google) - Best AI model for image generation

- Kling 3.0 and Veo 3.1 (Kuaishou / Google) - Best AI models for video creation

1. Claude (Anthropic) - Best AI Model for Writing and Agentic Tasks

Pros

- Produces the most natural, nuanced long-form prose of any model

- Supports up to 128K output tokens in a single pass

- Ranks at or near the top of SWE-bench coding benchmarks

- Excels at multi-step agentic workflows and complex reasoning

Cons

- API pricing is higher than some competitors at the frontier tier

- No built-in real-time web search in base chat mode

Key strengths

- Long-form writing: articles, reports, scripts, and documentation with minimal editing needed

- Code generation and architectural planning across all major languages

- Multi-step agentic tasks: Claude can operate autonomously through long workflows

- Complex reasoning: breaks down ambiguous problems into clear, structured answers

- Context retention across very long conversations and document inputs

Best for: Content writers, developers, researchers, and teams building AI agents

Access: claude.ai, Claude API, Claude Code (terminal), Claude for VS Code

Top model: Claude Opus 4.6 (flagship), Claude Sonnet 4.6 (balanced speed and quality)

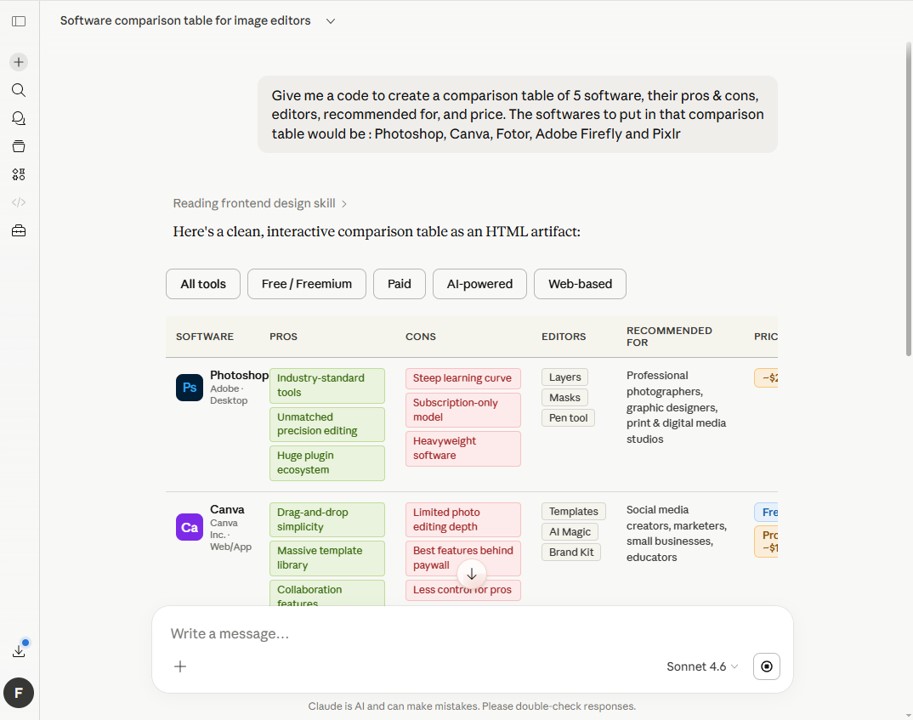

Why Claude leads for writing

Among all the frontier AI models tested in 2026, Claude consistently produces the most readable, naturally flowing prose. It understands tone, adapts its voice to context, and avoids the mechanical repetition that other models fall into on long outputs. For anyone producing significant volumes of written content, whether marketing copy, technical documentation, or creative storytelling, Claude is the model that requires the least editing afterward.

On the coding side, Claude Opus 4.6 sits at the top of SWE-bench Verified alongside GPT-5 variants, and it powers the two most widely used AI coding editors on the market. For developers who need a model that can understand an entire codebase, plan a refactor, and execute it without losing context, Claude is the go-to choice.

2. GPT-5 (OpenAI) - Best All-Around AI Model

Pros

- Largest ecosystem: plugins, integrations, and third-party tools

- Internal routing selects the best sub-model for each request automatically

- Canvas editing environment is the best for iterative document work

- Competitive performance across every benchmark category

Cons

- Can feel less nuanced than Claude on creative long-form writing

- Full capabilities require a paid plan: Plus costs $20 per month, and the top-tier Pro plan costs $200 per month

Key strengths

- Automatic model routing: GPT-5 selects the right internal model for each task in real time

- Multimodal understanding: text, images, code, files, and data in one interface

- Canvas environment for collaborative document writing and editing

- Built-in image generation directly in chat

- Massive third-party integration library via the GPT Store

Best for: Everyday users, business professionals, and teams that want one tool for everything

Access: ChatGPT web, iOS, Android, API

Top model: GPT-5.4 Pro (frontier), GPT-5.1 Instant (fast and affordable)

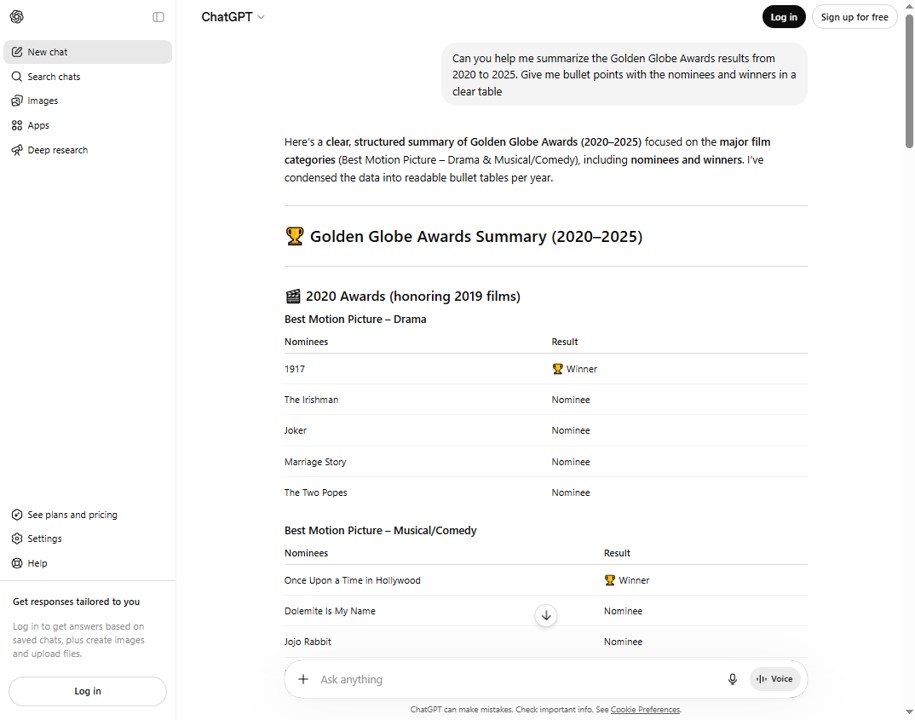

Why GPT-5 leads for general use

GPT-5 is the model most people reach for first, and for good reason. Its internal routing system means you do not have to think about which version to use for which task. Writing a quick email, analyzing a spreadsheet, generating an image, explaining a complex concept: GPT-5 handles all of it from one interface. The GPT Store also gives access to thousands of specialized agents and integrations that extend what the model can do out of the box.

For businesses that want a single AI tool that covers the widest range of everyday tasks without switching platforms, GPT-5 is the safest and most versatile starting point in 2026.

3. Gemini 3 Pro (Google) - Best AI Model for Reasoning and Data Analysis

Pros

- 1 million token context window: processes entire codebases or document libraries

- Top-ranked on math, science, and complex reasoning benchmarks

- Native multimodal processing: text, images, audio, video, and PDFs simultaneously

- Direct integration with Google Workspace, Sheets, and Analytics

Cons

- API pricing increased with the Gemini 3 generation

- Community feedback on consistency and billing has been mixed

Key strengths

- 1M token context window: ideal for large-scale data analysis and document processing

- Benchmark leader on AIME math (95.0%), GPQA science, and complex multi-step reasoning

- Processes text, images, audio, video, and PDFs in a single unified request

- Deep Workspace integration for real-time analysis inside Google Sheets and Docs

- Powers Nano Banana image generation natively within the Gemini ecosystem

Best for: Researchers, data analysts, scientists, and developers working with large context inputs

Access: Gemini app, Google AI Studio, Vertex AI API

Top model: Gemini 3.1 Pro (current frontier), Gemini 3.1 Flash (fast and cost-efficient)

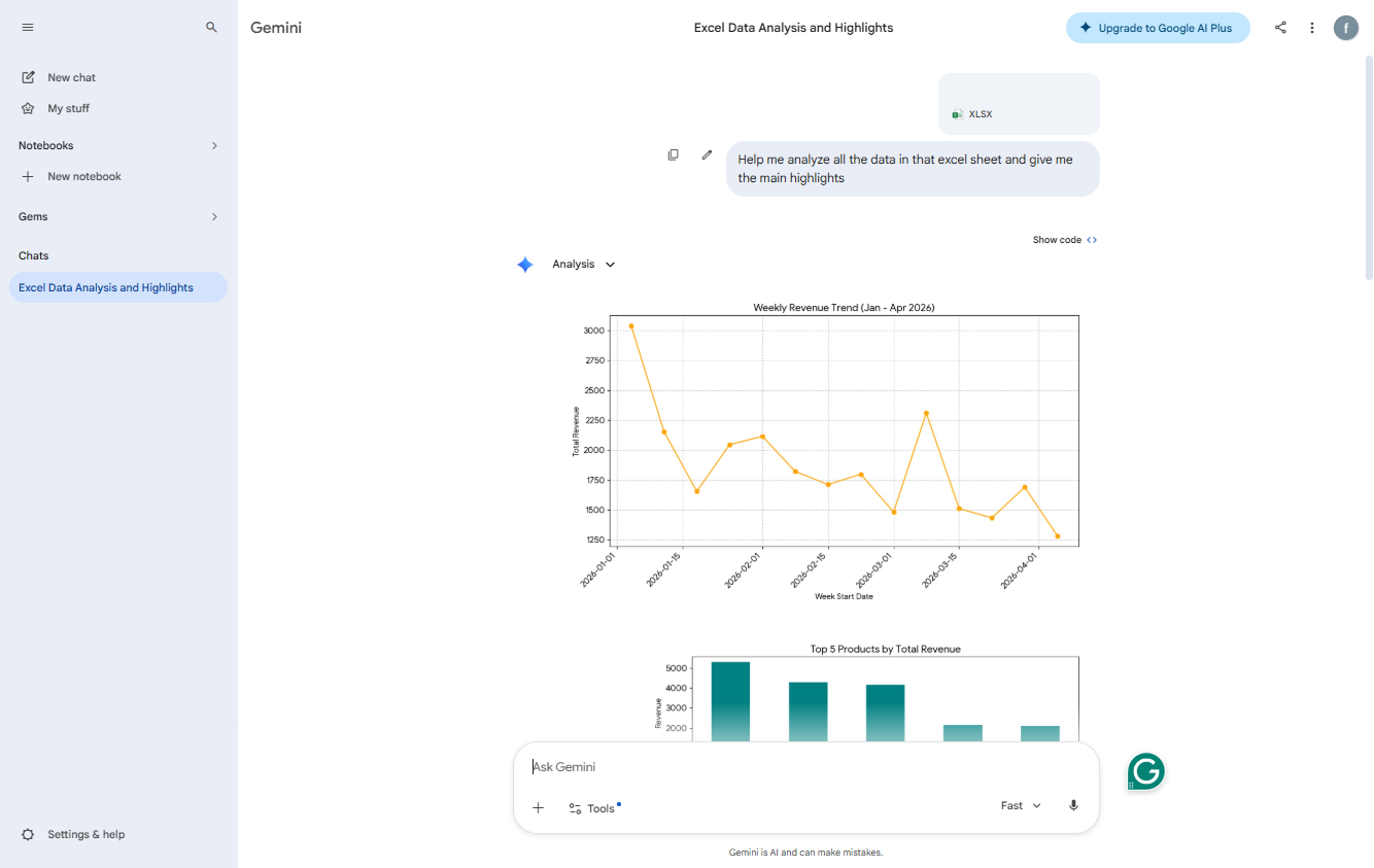

Why Gemini 3 Pro leads for reasoning and data

When the task involves processing enormous amounts of information at once, Gemini 3 Pro has a structural advantage: its 1 million token context window. This means you can feed it an entire codebase, a year's worth of financial reports, or a full research paper library and ask questions across all of it in one pass, with far less need for chunking or summarizing and less risk of losing detail across documents.

On pure reasoning benchmarks, Gemini 3 Pro consistently scores at or near the top across math, science, and multilingual tasks. For organizations that live inside the Google ecosystem and need AI that plugs directly into their existing data workflows, it is the most naturally integrated option available.

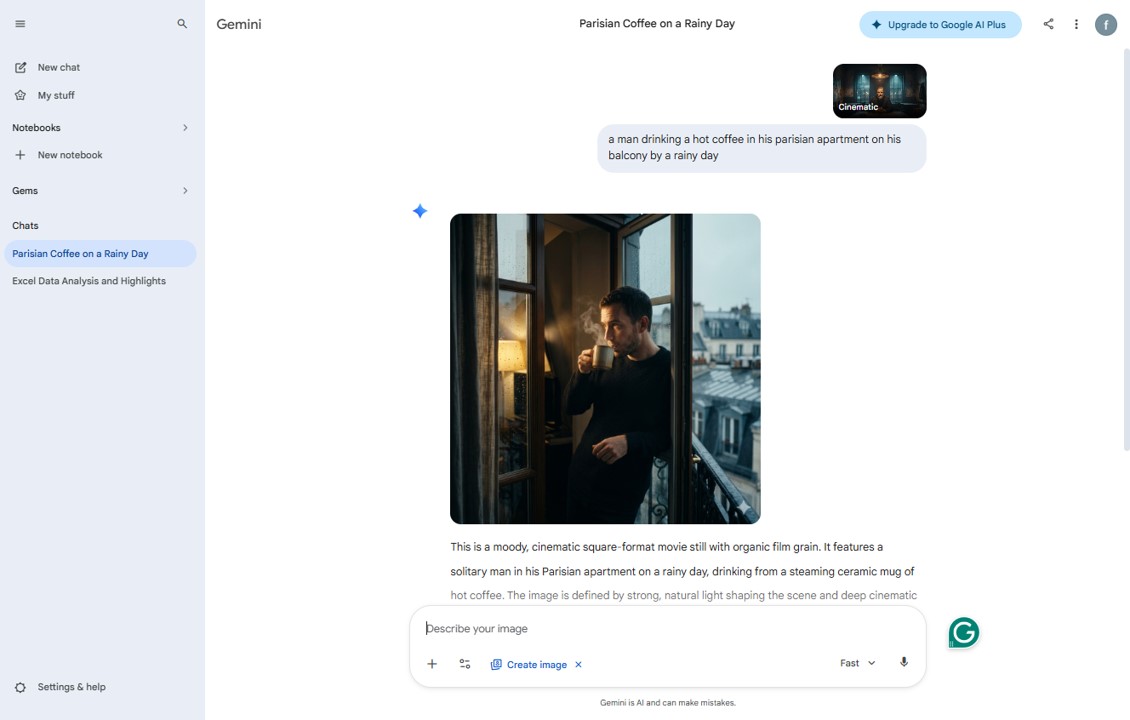

4. Nano Banana Pro (Google) - Best AI Model for Image Generation

Pros

- Generates and edits images through natural language conversation

- Maintains character consistency across up to 5 characters and 14 objects

- Outputs up to 4K resolution with vibrant lighting and sharp detail

- Powered by Gemini 3 Pro Image: best-in-class reasoning applied to visuals

Cons

- Available through the Gemini app and other Google products, not as a standalone tool

- Some advanced features require a Google AI Ultra or Pro subscription

Key strengths

- Text-to-image and image editing from a single conversational interface

- 4K resolution output with realistic lighting, richer textures, and sharp detail

- Character consistency: maintain identity across multiple frames and edits

- Accurate text rendering within images: useful for posters, ads, and marketing materials

- Iterative chat-based editing: refine a generated image through follow-up prompts

- SynthID watermarking for transparency on AI-generated content

Best for: Marketers, designers, content creators, and e-commerce teams producing visual assets

Access: Gemini app, Google AI Studio, Google Ads, Workspace, MyEdit

Top model: Nano Banana Pro (Gemini 3 Pro Image), Nano Banana 2 (fast, Gemini 3.1 Flash Image)

Why Nano Banana Pro leads for image generation

Google launched the original Nano Banana in August 2025 to immediate popularity, with millions of images generated within the first weeks of release. The Pro version, built on Gemini 3 Pro Image, raised the bar further: studio-quality control, high-resolution output up to 4K, breakthrough text accuracy inside images, and the ability to maintain facial identity and stylistic consistency across complex multi-character edits.

What sets Nano Banana apart from competing image models is its chat-based, iterative approach. Rather than generating a single image and starting over when you want changes, you can describe refinements in natural language and the model applies them while preserving the rest of the composition. For commercial use cases where precision matters, such as product photography, ad visuals, and marketing materials, this level of control is a significant practical advantage.

5. Kling 3.0 and Veo 3.1 (Kuaishou / Google) - Best AI Models for Video Creation

Pros

- Both models generate native audio alongside video in a single pass

- Kling 3.0 supports native 4K output and multi-shot storyboarding

- Veo 3.1 leads on photorealism, physics simulation, and audio fidelity

- Both support image-to-video and text-to-video workflows

Cons

- Professional-quality outputs cost more per generation than basic alternatives

- Per-generation clips are still short (roughly 8 to 15 seconds, depending on the model) unless you chain multiple generations

Key strengths of Kling 3.0

- Native 4K HDR output with no upscaling or post-processing required

- Multi-shot storyboarding: up to 6 camera cuts with consistent characters in a single generation

- Director-level camera control: dolly zoom, crane shots, tracking, handheld styles

- Native audio generation with multilingual dialogue and lip-sync

- Cost-effective at scale: roughly $0.50 per clip for high-volume production teams

Key strengths of Veo 3.1

- Photorealistic physics: fluid dynamics, cloth motion, and particle effects with real-world accuracy

- Industry-leading native audio: 48kHz synchronized dialogue, ambient sound, and music in one pass

- Up to 4 reference images for precise character and style control

- Native vertical (9:16) support for Shorts, TikTok, and Reels

- Deep Google integration: works within Gemini, Vertex AI, and Google Flow

Best for: Creators, marketers, filmmakers, agencies, and brands producing social and commercial video content

Access: Kling AI platform (Kling 3.0), Google Vertex AI and Flow (Veo 3.1), and MyEdit (both)

Why these two lead the AI video category

AI video generation reached a turning point in 2025, moving from blurry experimental clips to production-grade footage with synchronized audio. In 2026, Kling 3.0 and Veo 3.1 represent the current quality ceiling. Independent benchmark tests across more than 100 prompts show Kling 3.0 leading the overall Elo rankings, with particular strength in camera movement fidelity and multi-shot storytelling. Veo 3.1 leads in audio synchronization quality and photorealistic physics simulation, making it the preferred choice for premium commercial and cinematic work.

For most creators, the practical question is not which model is objectively better, but which one fits the workflow and budget for a given project. The good news is that both are now accessible through unified platforms that let you switch between them without managing separate accounts.

MyEdit: The Best Platform for Combining Top AI Models for Media Creation

Pros

- Access to Nano Banana Pro, Kling 3.0, and Veo 3.1 from one platform

- Unified workflow: go from image generation to video creation without switching tools

- Built specifically for creators, marketers, and media production teams

- Covers the full creative pipeline: text prompts, image generation, editing, and video output

Cons

- Requires creating an account

Access to the best AI models in 2026 is one thing. Having them work together inside a single platform designed for media creation is another entirely.

MyEdit is the platform that brings Google's Nano Banana Pro, Kuaishou's Kling 3.0, and Google DeepMind's Veo 3.1 together in one unified workspace, specifically designed for creators, marketers, and businesses producing professional visual and video content.

What makes MyEdit different from other AI platforms

Most platforms give you access to a single AI model and ask you to figure out the rest. MyEdit is built around the insight that real creative workflows require more than one model, and that stitching those models together yourself wastes the time you saved using AI in the first place.

With MyEdit, you can generate a product image using Nano Banana Pro, refine it with AI background replacement and object removal, then pass it directly into Kling 3.0 or Veo 3.1 to produce a cinematic marketing video, all without leaving the platform or re-uploading your files. The result is a creative pipeline that previously required three or four separate subscriptions, now consolidated into one browser-based workspace.

The AI models powering MyEdit media creation

Nano Banana Pro (Image Generation and Editing)

MyEdit integrates Nano Banana Pro to handle all image creation and editing needs. Generate product visuals, marketing graphics, and lifestyle imagery from a text prompt. Refine existing photos with conversational edits that preserve composition and character consistency. Output up to 4K resolution for print, commercial display, and high-end digital campaigns.

Kling 3.0 (Cinematic Video for Social and Commercial Content)

Kling 3.0 inside MyEdit gives you native 4K video generation with multi-shot storyboarding, director-style camera controls, and native audio in multiple languages. For brands producing short-form social content, product ads, or concept previsualization at scale, Kling 3.0 offers the best cost-to-quality ratio at the frontier.

Veo 3.1 (Premium Cinematic and Audio-Visual Production)

When the project demands photorealistic physics, flawless audio synchronization, and broadcast-quality output, Veo 3.1 is available inside MyEdit for exactly that. From product demo videos with precise lighting simulation to cinematic brand stories with environment-matched ambient audio, Veo 3.1 handles the work that used to require a professional production team.

Best AI models comparison table

| AI Model | Best For | Developer | Top Category | Media Creation | Available on MyEdit |
|---|---|---|---|---|---|
| Claude Opus 4.6 | Writing, coding, agents | Anthropic | Long-form writing / Coding | Text only | No |
| GPT-5 | General use, everyday tasks | OpenAI | All-around performance | Text + Image | No |
| Gemini 3 Pro | Reasoning, data, science | Google DeepMind | Math / Reasoning | Text + Multimodal | No |
| Nano Banana Pro | Image generation and editing | Google DeepMind | AI image generation | Image up to 4K | Yes |
| Kling 3.0 | Social and commercial video | Kuaishou | AI video (4K, multi-shot) | Video + Audio | Yes |
| Veo 3.1 | Cinematic and premium video | Google DeepMind | AI video (physics, audio) | Video + Audio | Yes |

* MyEdit integrates Nano Banana Pro, Kling 3.0, and Veo 3.1 in one unified media creation platform. Claude, GPT-5, and Gemini 3 Pro are accessible through their own native products.

Frequently Asked Questions - Best AI Models

What are AI models?

AI models are trained software systems that take inputs such as text, images, audio, or video and produce intelligent outputs. Large Language Models (LLMs) like Claude and GPT-5 generate and understand natural language. Multimodal AI models like Gemini 3 Pro process multiple content types at once. Specialized AI models like Nano Banana Pro focus on image generation, while video AI models like Kling 3.0 and Veo 3.1 produce cinematic footage from text or image prompts. The best AI model for any task depends on what you are trying to create.

What are the best AI models in 2026?

The best AI models in 2026 depend on your use case. For writing and coding, Claude Opus 4.6 (Anthropic) leads on prose quality and agentic task performance. For general everyday use, GPT-5 (OpenAI) offers the broadest versatility and ecosystem. For reasoning and data analysis, Gemini 3 Pro (Google) tops math and science benchmarks and offers a 1 million token context window for large inputs. For AI image generation, Nano Banana Pro (Google DeepMind) leads with 4K output and iterative chat-based editing. For video creation, Kling 3.0 and Veo 3.1 represent the current quality ceiling for AI-generated cinematic footage with native audio.

What is the best AI model for image generation?

Nano Banana Pro is the best AI model for image generation in 2026. Built on Google's Gemini 3 Pro Image model, it produces outputs up to 4K resolution with vibrant lighting, sharp detail, and accurate text rendering inside images. Its key differentiator is iterative, chat-based editing: you can describe refinements in natural language and the model applies them while preserving character consistency and composition. It is available through the Gemini app, Google Workspace, and creative platforms like MyEdit.

What are the best AI models for video creation?

The two best AI models for video creation in 2026 are Kling 3.0 (from Kuaishou) and Veo 3.1 (from Google DeepMind). Kling 3.0 leads on native 4K output, multi-shot storyboarding, and cost-effective high-volume production. Veo 3.1 leads on photorealistic physics simulation, native audio quality, and broadcast-level cinematic output. Both generate synchronized audio alongside video in a single pass. For most creators and marketing teams, the best option is a platform like MyEdit that gives you access to both models in one workflow.

Is Nano Banana a Google AI model?

Yes. Nano Banana is Google's AI image generation model, first released in August 2025 as part of the Gemini model family. The original version is powered by Gemini 2.5 Flash Image. Nano Banana Pro (launched November 2025) is built on Gemini 3 Pro Image and offers studio-quality resolution, advanced character consistency, and precise text rendering. Nano Banana 2, released in February 2026, runs on Gemini 3.1 Flash Image and pairs quality close to the Pro model with faster generation speeds. All versions are accessible through the Gemini app and integrated platforms like MyEdit.

Which platform combines the best AI models for media creation?

MyEdit is the platform that combines the most powerful AI models for media creation in one place. It integrates Nano Banana Pro for image generation and editing, Kling 3.0 for high-volume cinematic video production, and Veo 3.1 for premium photorealistic video with native audio. Rather than managing separate subscriptions and accounts across three different providers, MyEdit gives creators, marketers, and e-commerce teams access to all three in a unified browser-based workspace with no software to install.

Is Claude better than GPT-5?

Neither model is universally better: they lead in different areas. Claude (Anthropic) produces more natural, nuanced long-form writing and leads on agentic coding benchmarks, making it the preferred choice for writers and developers who need minimal editing on complex outputs. GPT-5 (OpenAI) covers a wider range of everyday tasks from a single interface, has a larger third-party integration ecosystem, and is often considered the more versatile general-purpose tool. The right choice depends on your primary use case: writing and coding tasks favor Claude, while broad everyday use and ecosystem access favor GPT-5.

Can you use Kling 3.0 and Veo 3.1 together?

Yes, through platforms like MyEdit that integrate both models in one workspace. Kling 3.0 and Veo 3.1 each have distinct strengths: Kling leads on 4K multi-shot storytelling and cost-effective scale, while Veo leads on physics realism and audio quality. Using both within the same platform means you can choose the right model for each project without switching accounts, re-uploading assets, or paying for two separate subscriptions. MyEdit makes this workflow practical for creative and marketing teams.

The CyberLink Editorial Team is composed of product testing specialists and content creation experts with over 20 years of hands-on experience and rigorous research in the multimedia editing industry. As the official voice of CyberLink, the team consistently delivers practical, easy-to-follow tutorials across video, photo, and audio editing, while highlighting AI innovations, product insights, and emerging industry trends. Their work empowers millions of creators worldwide each year to elevate their content. Beyond the blog, the team actively shares editing tips and product updates across YouTube, Facebook, LinkedIn, and Pinterest.

😎 Outside of their work, they enjoy capturing travel moments and meticulously editing photos and videos for their social channels.